Penpot

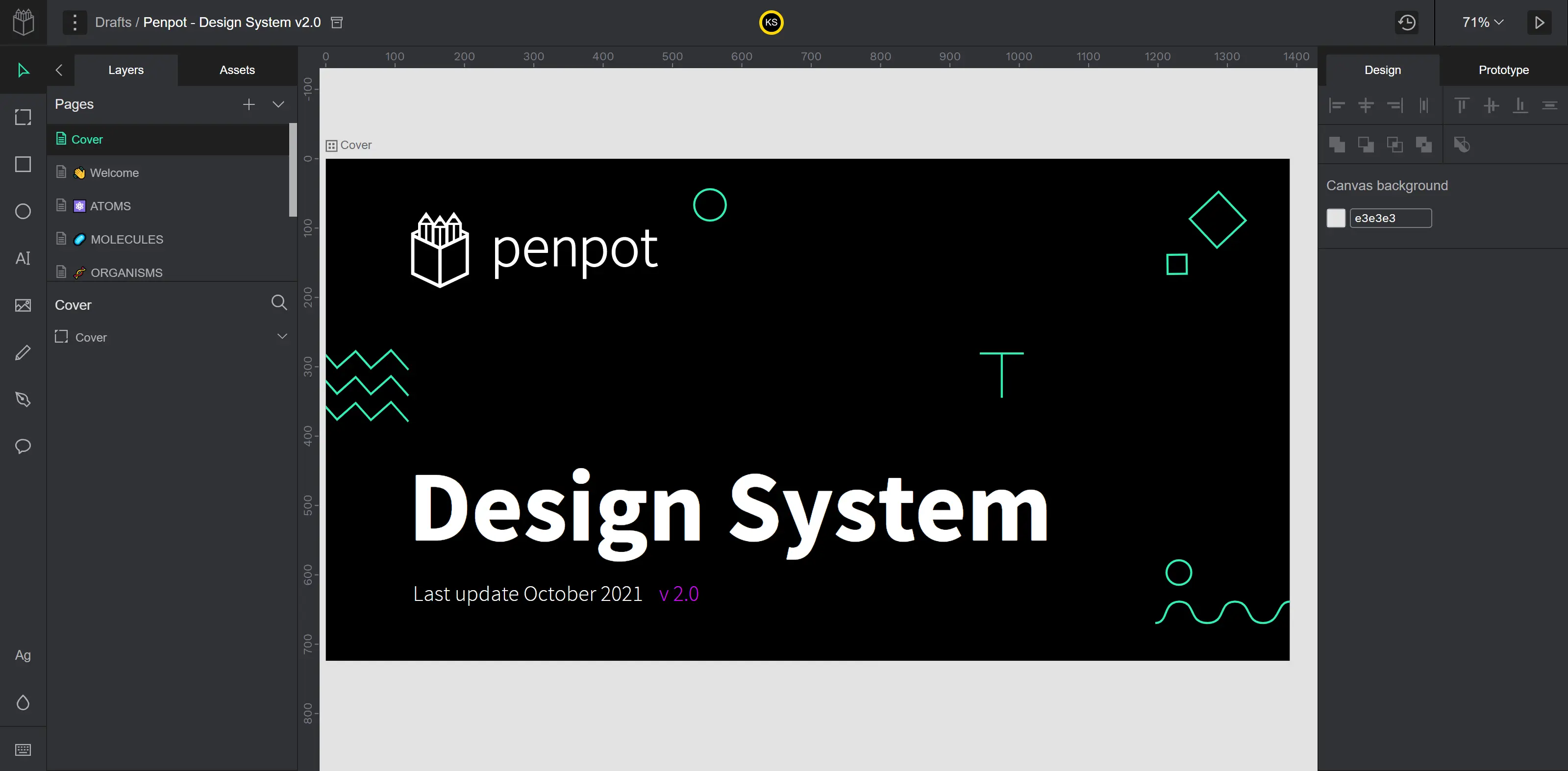

Penpot is an open-source design and prototyping platform that bridges the gap between designers and developers. It’s browser-based, multiplayer, and collaborative. The app offers reusable components, flex layout, font management, interactive prototyping, developer tools such as code and property inspectors, and much more.

Web App Testing

We helped Penpot thoroughly polish their web app before the official launch, making sure users could benefit from all the great features without any distractions or obstacles. We tested the existing and new functionality, ensuring nothing detracted from the product’s value.

Automated Testing

We helped Penpot identify areas suitable for test automation and implemented it from the ground up. We automated a decent number of regression tests, which helped Penpot catch even more critical bugs before each build was deployed and shipped to users.

Challenge

Penpot turned to QAwerk to make sure they could launch their product to the general public within the set timeframe. They wanted their in-house team to be laser-focused on developing value-adding features and polishing the product functionality rather than worrying about bugs and ways to capture them.

Here is a brief overview of the things we were expected to deliver:

- Devise test strategy & plan. Before partnering with QAwerk, the Penpot team reported bugs with the help of internal team members and their large community of beta version users. With Penpot’s growth in popularity and intention to remove the beta label from their app, this approach was no longer sustainable. That’s why we were asked to plan the entire testing effort and establish proper QA workflows to lay the foundation for ongoing professional testing in the future.

- Go from beta to official release. Eliminating bugs and improving performance before releasing the product to the public as commercially ready was paramount for Penpot. Our task here was to methodically go through all the existing functionality and find as many issues as possible, so that no critical bugs remained in production and the app ran without lag or data-saving problems.

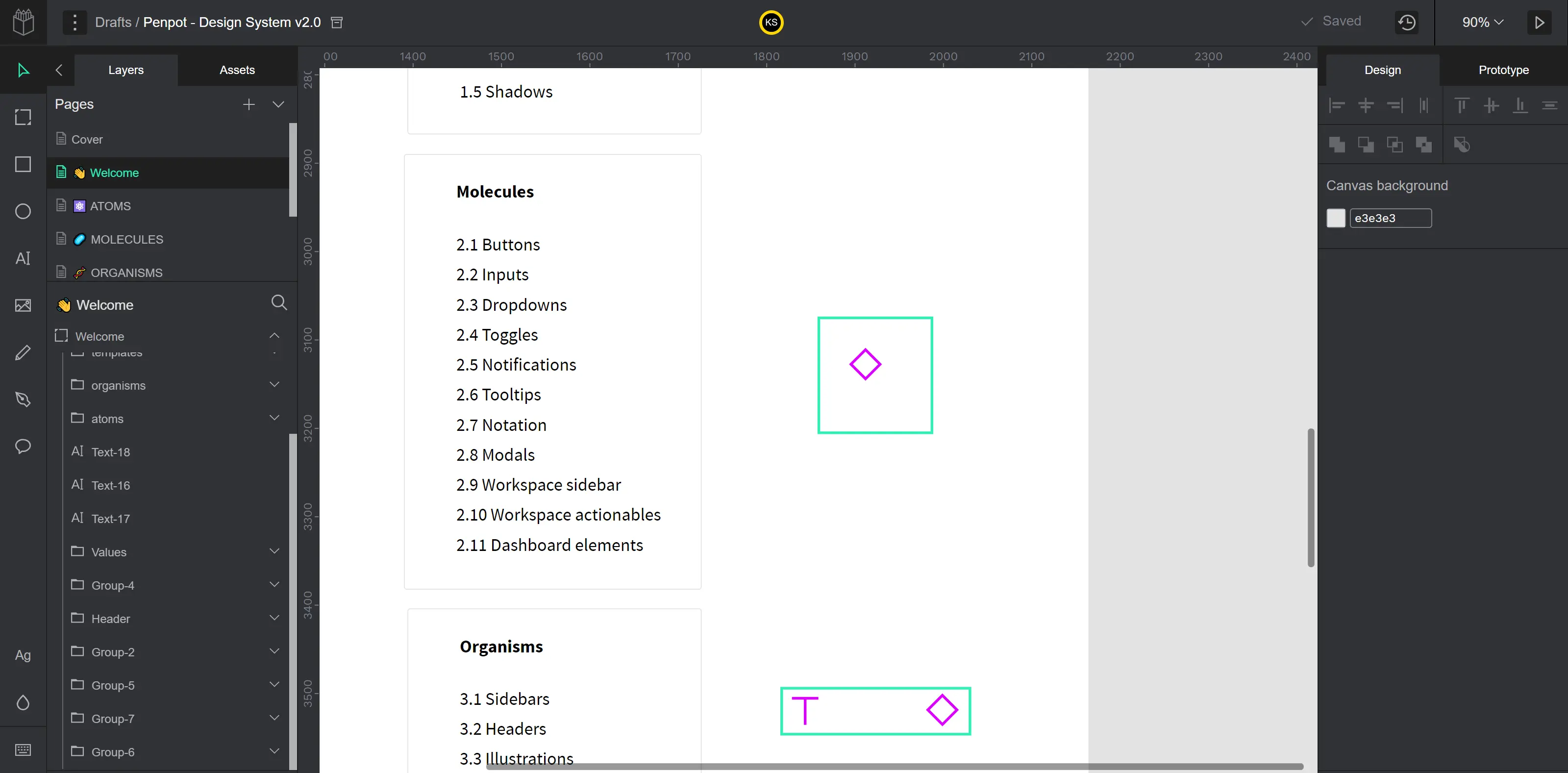

- Polish new functionality. The production release included some long-awaited killer features, such as flexible interfaces, accessing code right from the workspace area, and accessibility improvements, among other things. Our task was to make sure these upgrades were integrated seamlessly, caused no disruptions to the existing functionality, and made users genuinely excited about using Penpot.

Besides that, we wanted to help Penpot achieve better coverage and release updates with peace of mind, so we proposed complementing manual testing with test automation.

Solution

When we first started testing, we encountered a great many internal server errors that appeared seemingly at random, and it wasn’t initially clear what caused them. These bugs required immediate fixing, since users experiencing them could lose their work.

So we recorded videos to capture the exact steps to reproduce this type of bug, looking for a common pattern between occurrences to figure out which user actions could have triggered the errors and which app areas were most prone to them. We shared our findings with Penpot’s developers, and eventually they eradicated the problem entirely.

Our testing effort included several testing types, such as:

- Functional Testing. We checked if the user authorization went smoothly. We thoroughly tested the user profile, dashboard, and workspace modules. We made sure all changes were applied to the design without any lags, especially during collaborative editing.

- Regression Testing. The Penpot team continuously ships new features and upgrades to existing ones, which necessitates regular regression testing. We combine manual and automated regression testing to achieve the best coverage and consistent results.

- UI Testing. The sleek visual appearance of the user interface is paramount for any modern app, especially for a design tool like Penpot used by UI/UX and product professionals at large corporations. We checked element positioning and alignment on every interface and ensured UI patterns were consistent throughout the app.

- Compatibility Testing. Our QA engineers tested Penpot in three major browsers – Firefox, Chrome, and Safari. We tested Penpot in several Safari versions, as some bugs appeared only in older versions. We also used three different operating systems, Windows, macOS, and Linux, to ensure all users can benefit from Penpot regardless of their devices.

As for test automation, we primarily focused on regression test cases.

Test Automation

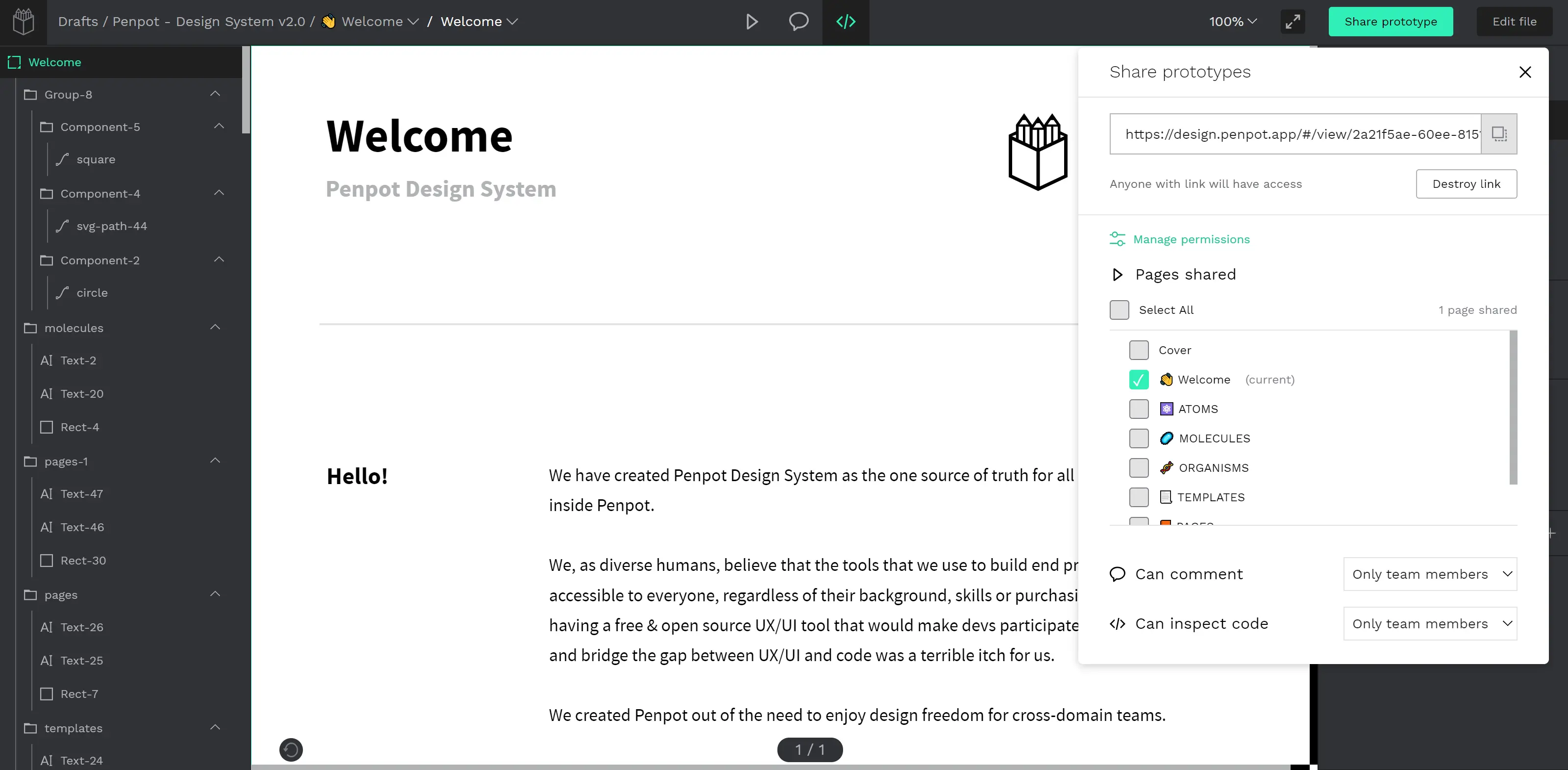

Test automation was performed using the Playwright framework. Playwright is designed to simplify cross-browser and cross-platform automation, so it suits our needs well.
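As a rough illustration of what cross-browser automation looks like in practice, a Playwright configuration can declare one project per browser engine, and every test then runs against each of them. The sketch below is not taken from Penpot’s repository; the `testDir` value and the base URL are placeholders chosen for the example.

```typescript
// playwright.config.ts: a minimal sketch, not Penpot's actual configuration.
import { defineConfig, devices } from '@playwright/test';

export default defineConfig({
  testDir: './tests', // assumed location of the spec files
  use: {
    baseURL: 'https://penpot.example', // hypothetical URL of the app under test
  },
  // One project per engine: Chromium, Firefox, and WebKit (Safari's engine).
  projects: [
    { name: 'chromium', use: { ...devices['Desktop Chrome'] } },
    { name: 'firefox', use: { ...devices['Desktop Firefox'] } },
    { name: 'webkit', use: { ...devices['Desktop Safari'] } },
  ],
});
```

With a configuration like this, `npx playwright test` runs the suite in all three engines, and `npx playwright test --project=firefox` limits the run to one.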

When selecting test cases for automation, we followed two rules: test cases should be of high impact, and their preparation shouldn’t consume too much time. This way, you can see a positive ROI.
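The two rules can be read as a simple filter over candidate test cases. The sketch below is only an illustration: the `impact` scale, the `prepHours` estimate, both thresholds, and the sample figures are assumptions made up for the example, not numbers from the project.

```typescript
// Hypothetical model of a candidate test case; the fields are assumptions for illustration.
interface Candidate {
  name: string;
  impact: number;    // assumed scale: 1 (low) to 5 (high)
  prepHours: number; // assumed estimate of the effort needed to automate the case
}

// Keep only the cases that are both high impact and cheap to prepare.
function selectForAutomation(
  candidates: Candidate[],
  minImpact: number = 4,
  maxPrepHours: number = 8,
): Candidate[] {
  return candidates.filter(
    (c) => c.impact >= minImpact && c.prepHours <= maxPrepHours,
  );
}

// Made-up figures, used only to exercise the filter.
const candidates: Candidate[] = [
  { name: 'login', impact: 5, prepHours: 2 },
  { name: 'sign-up', impact: 5, prepHours: 20 }, // costly: depends on a third-party mail API
  { name: 'rename file', impact: 4, prepHours: 1 },
  { name: 'drag layer', impact: 3, prepHours: 12 },
];

const selected: string[] = selectForAutomation(candidates).map((c) => c.name);
console.log(selected);
```

With these made-up figures, only `login` and `rename file` pass the filter; `sign-up` is high impact but too expensive to prepare, which mirrors the reasoning in the text.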

For example, we didn’t automate cases like sign-up or forgot password, because they depend on third-party APIs such as Gmail and automating that flow is not worth the effort. We also skipped cases with drag-and-drop or copy-paste actions, because Playwright does not always handle them correctly and the tests can be unstable.

We automated test cases like login, creating and deleting files, opening and renaming files, creating and deleting boards, adding blur to boards, changing the background color, among many others. They are available in Penpot’s public repository.

We also leveraged Playwright’s visual comparisons for snapshot testing. To minimize the number of failed tests because of UI changes, we used screenshots that captured only a single element or section, such as a created shape. This way, if new sections were added to the design panel, they didn’t affect the test stability.

About 10% of our test cases require capturing the entire screen, for example, when we need to check not only the layers but also the toolbar. Here we used masking to ignore elements with dynamic content, such as timestamps or usernames. Even though it’s impossible to eliminate false positives completely, updating such test cases is easy and quick.
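Both snapshot approaches described here, element-level screenshots and full-screen screenshots with masking, map onto Playwright’s `toHaveScreenshot` assertion. The following is a sketch under stated assumptions rather than code from Penpot’s test suite: the URL, every `data-testid` selector, and the snapshot file names are hypothetical.

```typescript
// visual.spec.ts: a sketch; the URL, selectors, and snapshot names are hypothetical.
import { test, expect } from '@playwright/test';

const WORKSPACE_URL = 'https://penpot.example/workspace'; // hypothetical

test('created shape matches its element-level snapshot', async ({ page }) => {
  await page.goto(WORKSPACE_URL);
  const shape = page.locator('[data-testid="created-rectangle"]'); // hypothetical locator
  // Only the shape's own bounding box is captured, so new panels
  // added elsewhere in the UI do not invalidate the baseline.
  await expect(shape).toHaveScreenshot('rectangle.png');
});

test('layers and toolbar match the full-page snapshot', async ({ page }) => {
  await page.goto(WORKSPACE_URL);
  await expect(page).toHaveScreenshot('workspace.png', {
    // Masked elements are covered with a solid box before comparison,
    // so dynamic content such as timestamps or usernames is ignored.
    mask: [
      page.locator('[data-testid="last-saved-time"]'), // hypothetical locator
      page.locator('[data-testid="user-name"]'),       // hypothetical locator
    ],
  });
});
```

When an intentional UI change lands, the stored baselines can be regenerated with `npx playwright test --update-snapshots`, which is what keeps updating such tests quick.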

Bugs Found

Most bugs we reported were associated with changes not applied while editing a file collaboratively, elements overlapping, overflowing text, and internal server errors.

We also detected a major compatibility issue: the app was unavailable to users opening Penpot in Safari versions older than 16. Even though these particular versions weren’t common among Penpot users, the issue was fixed the same day.

Actual result: The copied and pasted shape is not visible on the board.

Expected result: The copied and pasted shape is visible.

Actual result: ‘Internal Error’ appears; the bug is still reproducible after the page reload.

Expected result: The user can drag layers.

Actual result: Upon clicking the ‘Ungroup’ RMB menu item for the group of typographies on the ‘Assets’ panel, nothing happens; the group remains.

Expected result: The group is removed; the font is moved to the root of the “Typographies” section.

Result

Delegating all testing effort to QAwerk allowed Penpot to fully prepare their product for the official release and implement several killer features to delight users on the launch day. With our help, Penpot detected showstoppers and many other critical bugs and fixed them before they affected numerous users. With our joint effort, Penpot has transformed into a robust and stable platform that allows users to stay creative and focused on their work without any distractions.

We laid a solid foundation for continuous professional testing in the future and continue supporting Penpot in their mission of bringing innovative open-source solutions and improving collaboration between cross-domain teams.

Awarded

Fast Company Innovation by Design Awards

Tools

Playwright

BrowserStack

QAwerk Team Comments

Aliaksei

QA automation engineer

Creating automated test cases was sometimes challenging, given the product’s nature: complex interactions with objects and few unique locators when creating elements in the working space. But I love a good challenge, and I learned how to use Playwright to its full potential. I also discovered a few nuances about snapshot testing. Collaborating with Penpot’s teammates and leadership was a true pleasure, too: they were highly responsive, attentive to every bug, and appreciative of our suggestions.

Oleh

QA engineer

I'm very happy I've contributed to Penpot's official release, which was a success and the result of hard work on both sides. We've written a ton of test cases and uncovered numerous severe bugs, which positively affected the app's stability. Thanks to this project, I gained experience running Linux in VirtualBox and tried out a new bug-tracking tool, Taiga.

Related in Blog

Alpha Testing vs Beta Testing: A Complete Comparison

Both alpha and beta testing are forms of user acceptance testing, allowing to build confidence before the product launch. Both of them help collect actionable feedback and increase product usability. However, d...

Read More

How to Write Test Cases: QAwerk’s Comprehensive Guide

Right from the start, we are set to announce that there is no single all-purpose test case type. However, there is an easy-to-follow set of practices and solutions that, when implemented properly, will result in a good one. We’ve put together the test case writing bes...

Read MoreImpressed?

Hire usOther Case Studies

Arctype

Achieved app stability and speeded up software releases by 20% with overnight testing and automation

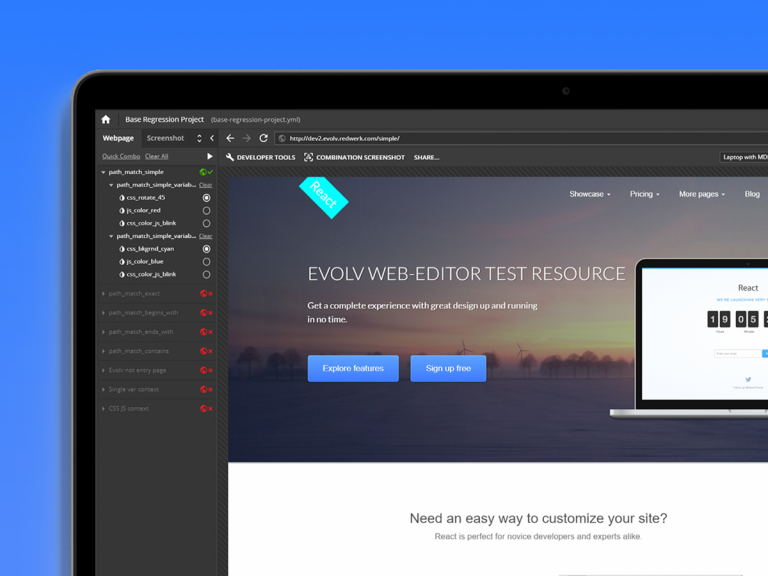

Evolv

Increased this digital growth platform’s regression-testing speed by 50%, and ensured the platform runs optimally 24/7

Keystone

Helped Norway’s #1 study portal improve 8 of their content-heavy websites, which are used by 110 million students annually