Couple Up! Love Show Story

Native Games Studio is an indie game developer specializing in mobile interactive story games. Established in 2020 with a team of three, it has grown into a small studio that released Couple Up!, a love reality show simulation for iOS and Android players.

Game Testing

We tested the backend of the Couple Up! mobile game by performing load testing, auditing server configurations, and conducting an in-depth review of the API codebase. Our recommendations will help the studio fix bottlenecks and provide an uninterrupted experience for large numbers of players.

Performance Testing

We helped our client identify test cases causing the server to struggle and respond with a significant delay. We outlined several areas to improve the performance – from adjusting database configurations to migrating to a different architecture and enhancing the API code quality.

Challenge

Native Games Studio turned to QAwerk to address the challenges of a growing user base. They wanted to ensure the Couple Up! game wouldn’t become sluggish or crash-prone under a substantially larger influx of players.

Here is what we were expected to deliver:

- Load Testing. We needed to test several API endpoints, gradually increasing server requests and tracking the server response time. Load testing helps identify the app’s peak operating capacity and breaking point and make necessary adjustments before users experience any negative issues.

- Server Configuration Audit. Our task was to scrutinize their Hetzner server configuration: check for network bottlenecks, review the nginx configuration, and audit the Docker containers, kernel settings, and service settings. This part was performed by our DevOps engineer.

- Code Review. We were also asked to review the server code and point out areas for improvement. We checked it against criteria like code quality, error handling, caching, and testability. Since the code was a REST API built with Flask, it was reviewed by our senior Python developer.

Before proceeding with the project, we provided the Couple Up! team with an estimation of our efforts to bring clarity and transparency to our cooperation. We also asked them to fill the gaps in the API documentation so that we had an accurate understanding of the underlying infrastructure.

Solution

Load Testing

We tested one GET and three POST API calls to measure their performance under varied load conditions. Load testing is usually done with a tool that simulates either different numbers of users interacting with your app simultaneously or a gradual increase in demand on the app. Our QA engineers chose Apache JMeter because it’s a reliable open-source tool, specifically designed to make load testing rapid yet effective.

One problem we faced was that the API headers were improperly described. Headers are vital in API testing because they contain metadata about the API request and response. They also help the API interpret your request and return exactly the information you need in the required format.

We solved this issue by writing a script that allowed us to describe the requests in JMeter correctly. After analyzing the system and its expected and peak load, we set the corresponding test data parameters.
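As an illustration of why header descriptions matter, the sketch below builds a POST request with explicit headers using Python's standard library, without sending it. The URL, header values, and payload are hypothetical placeholders; the case study does not list the headers the Couple Up! API actually requires, and this is not the script the team wrote.

```python
import json
import urllib.request

# Hypothetical endpoint and payload, used only for illustration.
URL = "https://api.example.com/v1/progress"
payload = json.dumps({"chapter": 1}).encode("utf-8")

# Headers tell the server how to read the body and which format to return.
headers = {
    "Content-Type": "application/json",  # format of the request body
    "Accept": "application/json",        # format expected in the response
    "Authorization": "Bearer <token>",   # placeholder credential
}

request = urllib.request.Request(URL, data=payload, headers=headers, method="POST")

if __name__ == "__main__":
    # Inspect the request instead of sending it.
    print(request.get_method(), request.full_url)
    for name, value in request.header_items():
        print(f"{name}: {value}")
```

In JMeter, the same name/value pairs would typically go into an HTTP Header Manager element attached to the request.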

Here are the test cases we used:

- Add 1 thread (user) in 1 sec

- Add 100 threads (users) in 1 sec

- Add 1000 threads (users) in 10 sec

- Add 10000 threads (users) in 1000 sec
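To compare these cases, it helps to convert each one into an average thread start rate. The sketch below assumes the threads are started evenly across the ramp-up period, which is how a JMeter Thread Group spreads its ramp-up; the names `TEST_CASES`, `start_rate`, and `start_interval` are illustrative and not part of the project's test plan.

```python
# Each test case as (threads, ramp_up_seconds), taken from the list above.
TEST_CASES = [(1, 1), (100, 1), (1000, 10), (10000, 1000)]


def start_rate(threads: int, ramp_up_seconds: float) -> float:
    """Average number of new threads (users) started per second."""
    return threads / ramp_up_seconds


def start_interval(threads: int, ramp_up_seconds: float) -> float:
    """Delay in seconds between consecutive thread starts."""
    return ramp_up_seconds / threads


if __name__ == "__main__":
    for threads, ramp_up in TEST_CASES:
        print(f"{threads:>6} threads in {ramp_up:>5} s -> "
              f"{start_rate(threads, ramp_up):g} new users/s")
```

Under this assumption, the second and third cases both add 100 users per second, but the third sustains that rate ten times longer. The fourth case reaches the largest population at a gentler 10 users per second.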

We measured the server’s performance in terms of response times over time, active threads over time, and internal server error rates.
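Figures such as response times and error rates can be derived from JMeter's results file. The sketch below assumes the results were saved in CSV format with a header row that includes the `elapsed` (milliseconds) and `success` columns. The inline sample rows and endpoint labels are made up for illustration and are not the project's data.

```python
import csv
import io
import statistics

# Made-up sample in JMeter's CSV results layout (subset of columns).
SAMPLE_JTL = """timeStamp,elapsed,label,responseCode,success
1700000000000,120,GET /state,200,true
1700000000100,3400,POST /choice,200,true
1700000000200,27000,POST /choice,500,false
1700000000300,250,POST /login,200,true
"""


def summarize(jtl_file) -> dict:
    """Return sample count, error rate, and response time stats in ms."""
    rows = list(csv.DictReader(jtl_file))
    if not rows:
        raise ValueError("results file contains no samples")
    elapsed = [int(row["elapsed"]) for row in rows]
    errors = sum(1 for row in rows if row["success"].lower() != "true")
    return {
        "samples": len(rows),
        "error_rate": errors / len(rows),
        "median_ms": statistics.median(elapsed),
        "max_ms": max(elapsed),
    }


if __name__ == "__main__":
    print(summarize(io.StringIO(SAMPLE_JTL)))
```

Plotting `elapsed` against `timeStamp` from the same file gives the response-times-over-time view.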

Server Configuration Audit

Our server configuration audit aimed to identify opportunities for improving the server’s performance. Since the mongod processes account for most of the CPU and RAM load on the server, optimizing them was the priority.

We provided the Couple Up! team with a list of the exact configurations that needed to be put in place. For example, we broke down how to tweak Linux kernel settings, such as max map count, swappiness, dirty_ratio, max number of queued connections, local port range, and several others, for the anticipated load of 50K connections.
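The settings named above correspond to Linux sysctl parameters. The sketch below reads their current values from `/proc/sys` so they can be compared with whatever targets an audit recommends. Mapping "max number of queued connections" to `net.core.somaxconn` is an assumption, and no recommended values are shown because the case study does not publish them.

```python
from pathlib import Path

# sysctl keys for the settings named in the text; the somaxconn mapping
# is an assumption about what "queued connections" refers to.
SYSCTL_KEYS = [
    "vm.max_map_count",
    "vm.swappiness",
    "vm.dirty_ratio",
    "net.core.somaxconn",
    "net.ipv4.ip_local_port_range",
]


def sysctl_path(key: str, root: str = "/proc/sys") -> Path:
    """Translate a dotted sysctl key into its file path under /proc/sys."""
    return Path(root, *key.split("."))


def read_sysctl(key: str, root: str = "/proc/sys"):
    """Return the current value as a string, or None if unavailable."""
    try:
        return sysctl_path(key, root).read_text().strip()
    except OSError:
        return None


if __name__ == "__main__":
    for key in SYSCTL_KEYS:
        print(f"{key} = {read_sysctl(key)}")
```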

To reduce the CPU & RAM load, we advised our client to review how queries are formed, optimize the slow ones, and parallelize queries of the same type. We also suggested indexing routinely queried fields and re-indexing them in a timely manner. With the right indexing strategy, you can significantly increase your database performance.
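The effect of an index can be illustrated with a toy in-memory analogy. This is not MongoDB code and says nothing about the project's actual collections; the `players` documents and the `season` field are hypothetical. It only shows the idea: a scan inspects every document, while an index jumps straight to the matching ones.

```python
from collections import defaultdict

# Hypothetical player documents; field names are illustrative only.
players = [{"_id": i, "season": i % 5} for i in range(10_000)]


def find_by_scan(docs, field, value):
    """Without an index: every document is inspected."""
    return [d for d in docs if d[field] == value]


def build_index(docs, field):
    """Map each field value to the documents that contain it."""
    index = defaultdict(list)
    for d in docs:
        index[d[field]].append(d)
    return index


season_index = build_index(players, "season")

if __name__ == "__main__":
    # Both approaches return the same documents; only the work differs.
    assert find_by_scan(players, "season", 3) == season_index[3]
    print(len(season_index[3]), "matches found via the index")
```

The trade-off is the same as in a real database: the index costs memory and must be kept up to date on every write, so it pays off mainly for fields that are queried often.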

Additionally, we mentioned what default configurations should be changed and how.

Code Review

The QAwerk team performed a comprehensive code review and provided Couple Up! with quick, cost-effective action steps as well as significant architectural changes for better performance and scalability in the long term.

We highlighted the areas in need of refactoring to help the Couple Up! developers maintain the code, add new business logic, and scale it without pain in the future. We noted the need for unit and integration tests because they will make refactoring and expanding the code base easier.

Also, we recommended improving error handling so that the error messages were more informative and always showed correct status codes. This would simplify debugging and testing and help standardize the app’s communication with the API.
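One way to make error responses consistent is to route every exception through a single function that returns a structured body and a matching status code. The sketch below is framework-agnostic and hypothetical: `ApiError`, `NotFoundError`, and `error_response` are illustrative names, not taken from the Couple Up! codebase.

```python
import json


class ApiError(Exception):
    """Hypothetical base class for errors the API raises on purpose."""

    status_code = 400

    def __init__(self, message, status_code=None):
        super().__init__(message)
        self.message = message
        if status_code is not None:
            self.status_code = status_code


class NotFoundError(ApiError):
    status_code = 404


def error_response(exc: Exception):
    """Turn an exception into a (JSON body, HTTP status code) pair."""
    if isinstance(exc, ApiError):
        status, message = exc.status_code, exc.message
    else:
        # Unexpected failures: hide internals, report a server error.
        status, message = 500, "Internal server error"
    body = json.dumps({"error": {"code": status, "message": message}})
    return body, status


if __name__ == "__main__":
    print(error_response(NotFoundError("Episode not found")))
    print(error_response(ValueError("unexpected")))
```

In a Flask application, a function like this could be registered with the `errorhandler` decorator so that every endpoint reports failures in the same shape.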

Proper caching can significantly boost performance for a fraction of the cost compared to migrating to Amazon. That’s why we recommended that our client implement caching.
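As a minimal sketch of the idea, the decorator below caches a function's results in process memory for a fixed time-to-live. It is an assumption-laden illustration: the case study does not say which caching layer was recommended, and `ttl_cache` and `load_episode` are hypothetical names.

```python
import functools
import time


def ttl_cache(ttl_seconds: float, clock=time.monotonic):
    """Cache a function's results per argument tuple for ttl_seconds."""
    def decorator(func):
        store = {}  # args -> (expiry time, value)

        @functools.wraps(func)
        def wrapper(*args):
            now = clock()
            hit = store.get(args)
            if hit is not None and hit[0] > now:
                return hit[1]  # still fresh: skip the expensive call
            value = func(*args)
            store[args] = (now + ttl_seconds, value)
            return value

        return wrapper
    return decorator


calls = []  # records every real "database" call


@ttl_cache(ttl_seconds=30)
def load_episode(episode_id: int) -> dict:
    """Hypothetical stand-in for a slow database query."""
    calls.append(episode_id)
    return {"id": episode_id, "title": f"Episode {episode_id}"}


if __name__ == "__main__":
    load_episode(1)
    load_episode(1)  # served from the cache, no second "query"
    print("database calls:", len(calls))
```

The time-to-live bounds how stale a cached answer can get, which suits data that changes rarely, such as static story content, better than frequently updated player state.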

Last but certainly not least is the CI/CD pipeline. In case frequent releases are planned, CI/CD is essential for catching bugs before deploying updates and speeding up the delivery of new capabilities to users. So CI/CD setup was also on the list of our recommendations.

Bugs Found

Our load testing results revealed that the server struggled to handle a sharp increase in the number of users over a short time, resulting in system imbalance, long response times, and internal server errors.

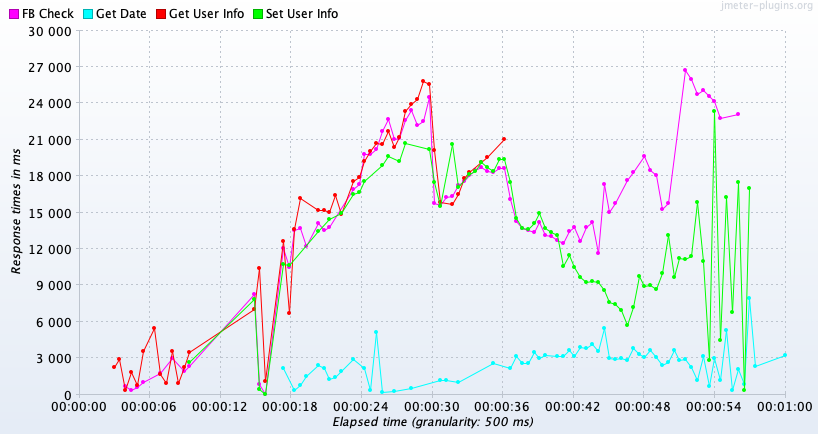

Actual result: When adding 1000 users in 10 seconds, most API requests take longer than 3 seconds to get processed, peaking at 27 seconds.

Expected result: The server response time should not exceed 3 seconds regardless of the test case performed.

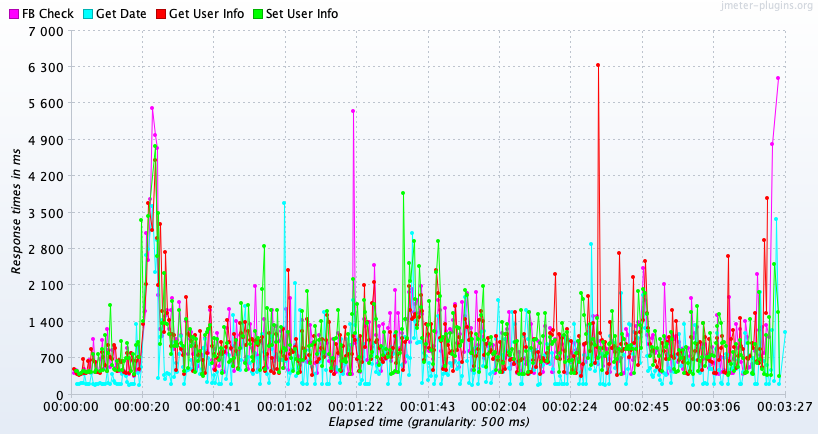

Actual result: When adding 10,000 users in 1000 seconds, most API requests have normal response times, yet we observe recurrent spikes, at times going over 6 seconds.

Expected result: There should be no drastic spikes in server response times; the line chart should tend to zero on the response times axis.

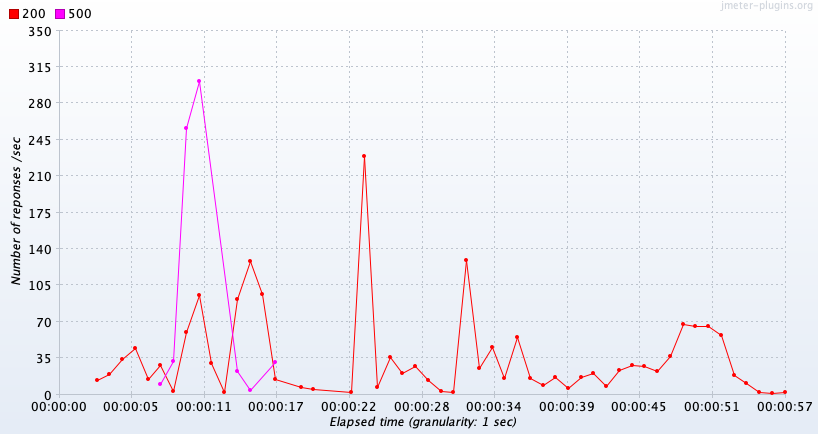

Actual result: The performance test report shows that 20% of server requests in the case of 1000 users added in 10 seconds return status code 500, an internal server error.

Expected result: The server error rate should tend to 0% to prevent loss of the players’ progress and ensure an immersive experience.

Result

QAwerk provided Couple Up! with a detailed load testing report and a step-by-step action plan to improve the server performance and reduce the number of errors. We deliberately broke down our recommendations into quick wins and major architecture changes. Quick wins are easy and cost-effective in implementation and bring immediate performance benefits. In contrast, the revamped cloud architecture would help to balance the load automatically and store data closer to the target audience, decreasing latency and eliminating other performance issues.

Since we provide managed services, the Couple Up! team received detailed feedback and expert advice from three specialists – our QA engineer, Python developer, and DevOps engineer. The insights we shared will help Native Games Studio prepare the Couple Up! game for the growing user base and develop new games with the right architecture from the very beginning.

QAwerk Team Comments

Alexander

QA engineer

I performed API load testing for the selected requests using JMeter, one of the most stable, high-quality tools for this purpose. I expanded my load testing expertise by using new metric types and closely collaborating with developers to solve the issue with headers.

Anton

Developer

I conducted the code review of the project. My biggest concern was that the commonly used software design patterns and code quality principles were not followed, complicating the maintenance and new feature implementation. The best way out would be to rewrite the code and assign the job to a developer well-versed in Python.
